Cognibot

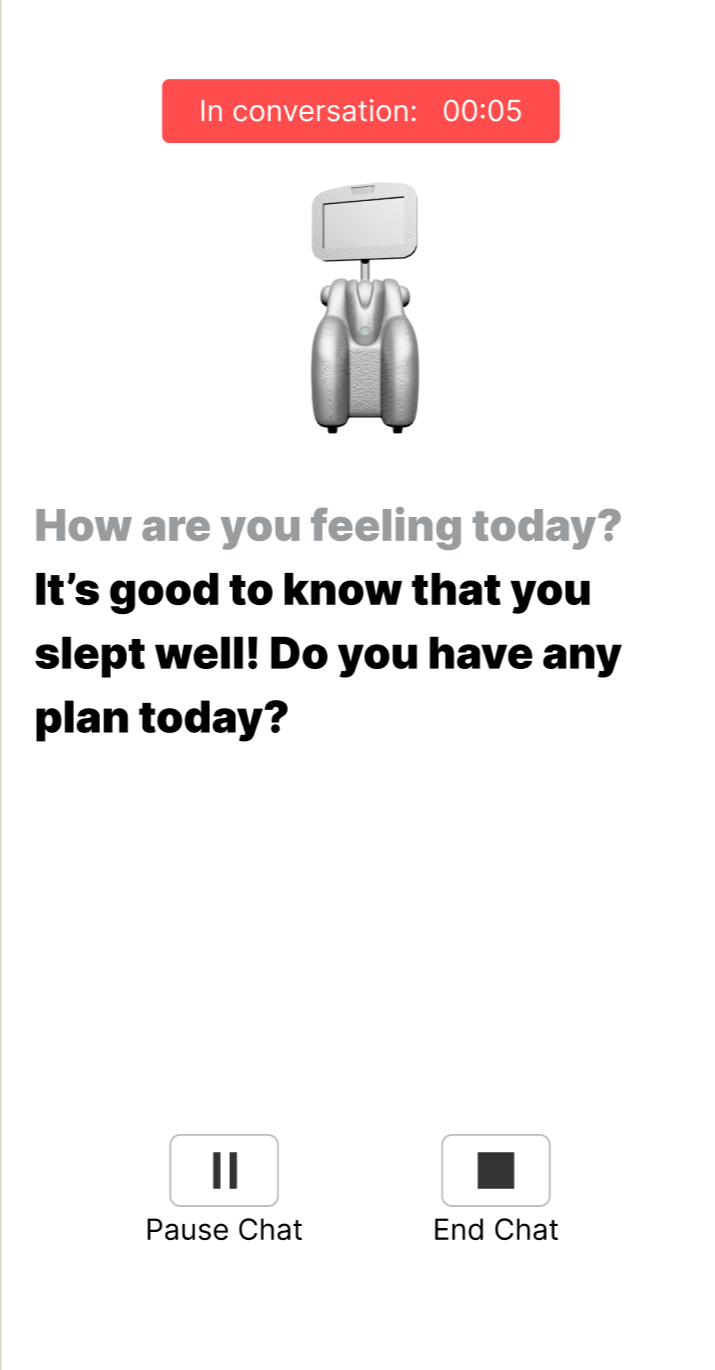

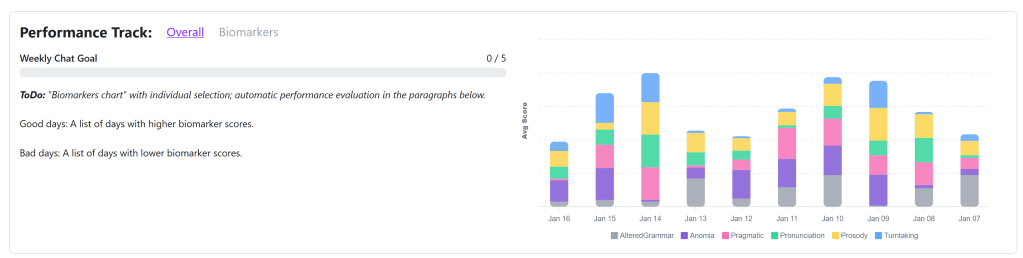

Cognibot is a mobile web application designed to support care for people living with dementia. It captures conversational data from a virtual avatar and visualizes it through a speech-tracking mobile application. Cognibot combines a customized LLM trained on data from real people living with dementia, speech biomarker analysis algorithms, a modern frontend created in collaboration with Human-Computer Interaction specialists, and Google Gemini’s speech-to-text and text-to-speech capabilities. Together, these aim to help analyze symptoms, track care progress, and provide care suggestions.

Skills

React, Python, JavaScript, Django, Artificial Intelligence, TypeScript, TailwindCSS, Docker, Vite, Google Cloud Platform, Google Gemini API, Large Language Models, PostgreSQL, Web Development

As the main frontend developer and a major backend developer on this project, I have seen the application go through many iterations. The earliest design focused on biomarker analysis and had only a minimal frontend. It had no virtual avatar, text-to-speech capabilities, or care suggestions, and it needed a lot of development.

One of the first things I did was build a React + JavaScript + TailwindCSS frontend with a Django + Python backend and integrate the old code into it. This allowed for much greater flexibility and functionality. Then came scaffolding pages, shifting from Azure to Gemini, implementing a 3D virtual avatar, and setting up vital application components such as the PostgreSQL database, user authentication, and APIs for accessing backend services.

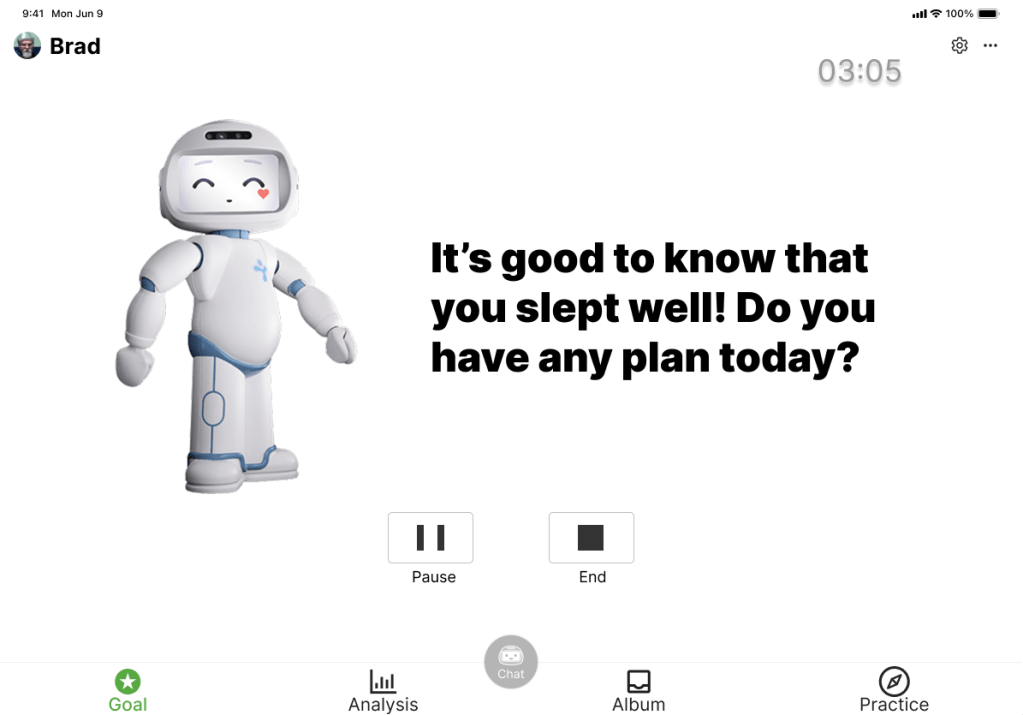

Through several studies, we have improved the application greatly since I started on this project. One of these studies focused on the virtual avatar, evaluating its usability, quality of responses, lag time, and voice. Based on this feedback, we developed an agentic AI approach with retrieval-augmented generation (RAG) to enhance conversational capabilities.
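To give a rough idea of what the retrieval step in RAG looks like, here is a minimal Python sketch. It is an illustration only and not Cognibot's actual code: the `NOTES` list, the function names, the word-overlap scoring, and the prompt layout are all assumptions made for this example. A production system would more likely use embeddings and a vector store, and the resulting prompt would be sent to the LLM.

```python
import math
import re
from collections import Counter

# Hypothetical snippets standing in for notes saved from earlier conversations.
NOTES = [
    "Enjoys gardening and grows tomatoes every summer.",
    "Daughter Maria visits on Sundays.",
    "Prefers tea over coffee in the morning.",
]


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, notes, k=2):
    """Return the k notes whose word counts are most similar to the query."""
    query_counts = Counter(tokenize(query))
    ranked = sorted(
        notes,
        key=lambda note: cosine(query_counts, Counter(tokenize(note))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, notes, k=2):
    """Prepend the retrieved notes to the user's message as context."""
    context = "\n".join(f"- {note}" for note in retrieve(query, notes, k))
    return (
        "Context from earlier conversations:\n"
        f"{context}\n\n"
        f"User: {query}\n"
        "Avatar:"
    )


if __name__ == "__main__":
    print(build_prompt("When does my daughter visit?", NOTES, k=1))
```

The point of the pattern is that the model answers with relevant context in front of it instead of relying only on what it learned during training.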

Exploratory study: What do people want?

We conducted an exploratory study aimed at uncovering what people living with dementia really want to see in our system concept: one that captures conversational data from a robot and visualizes it through a speech-tracking mobile application.

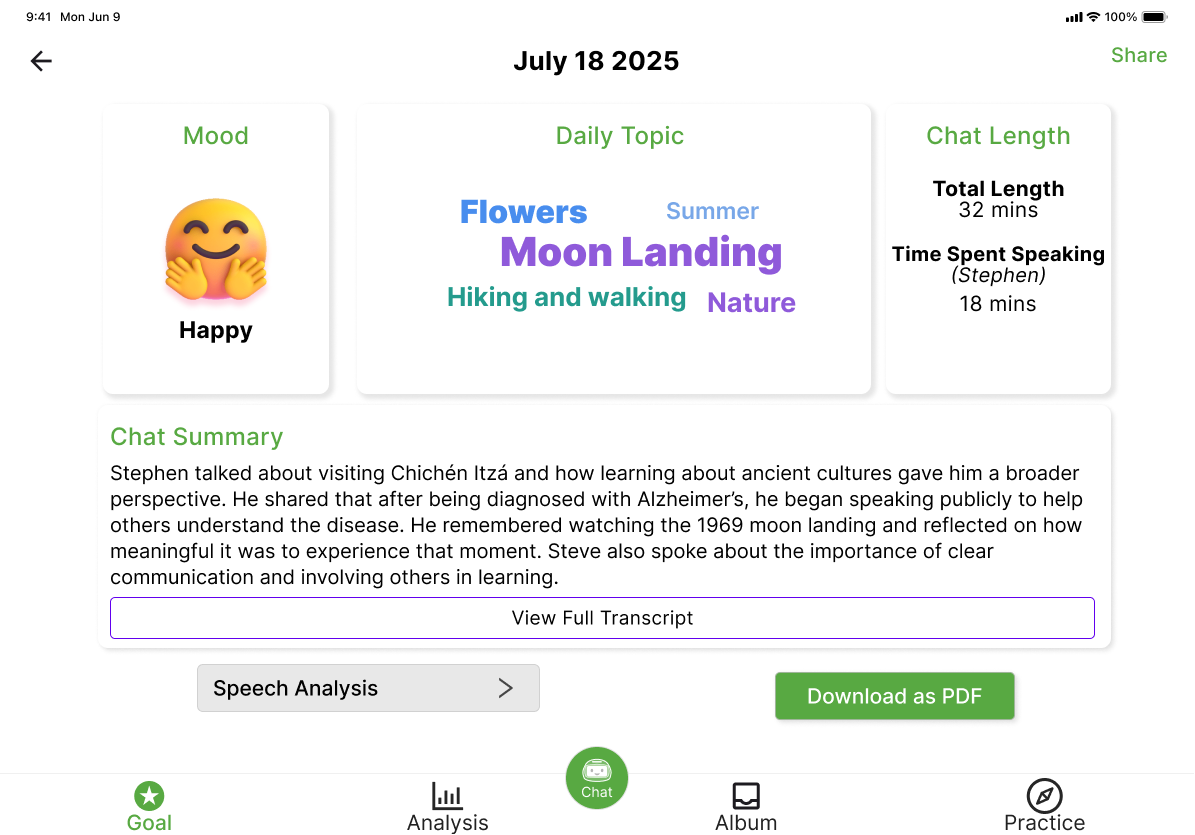

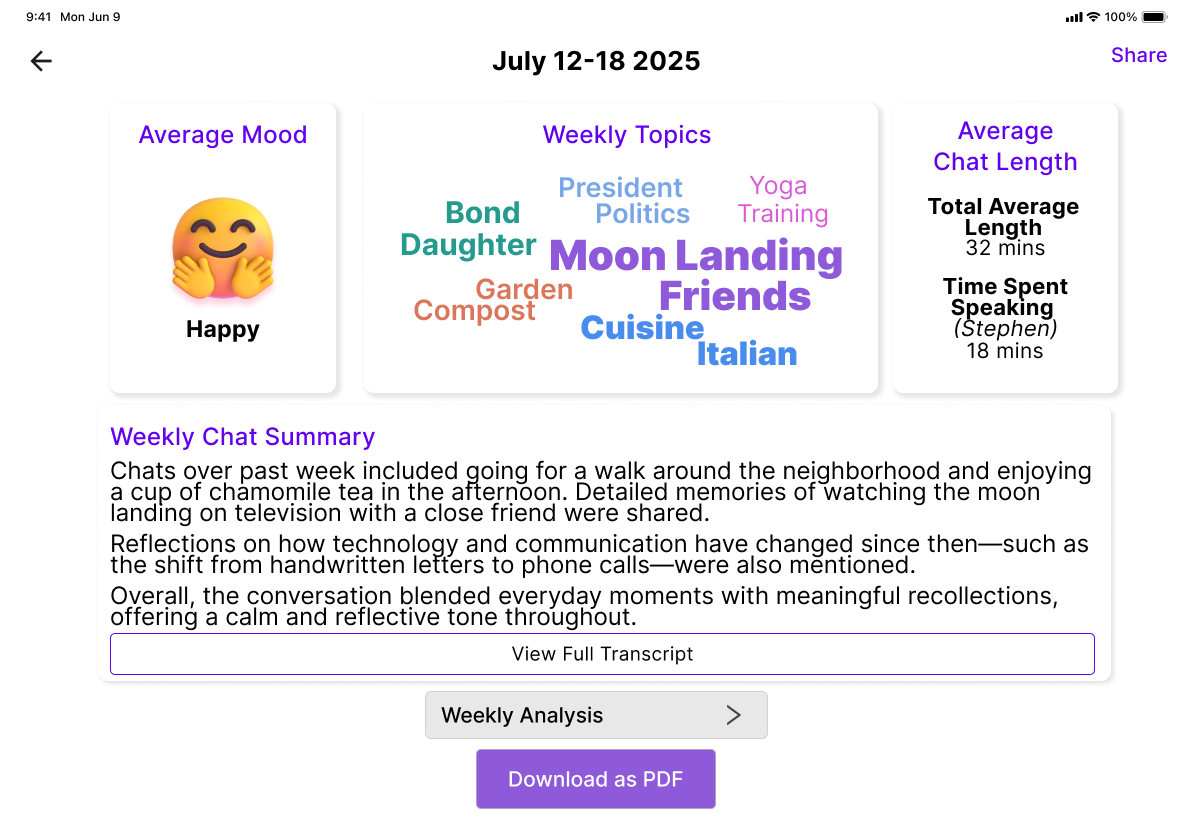

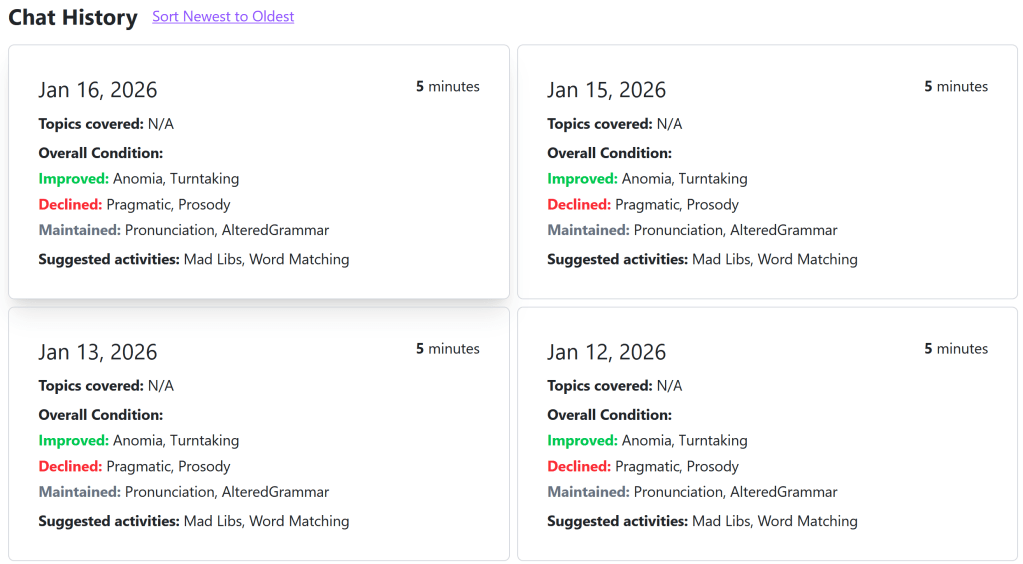

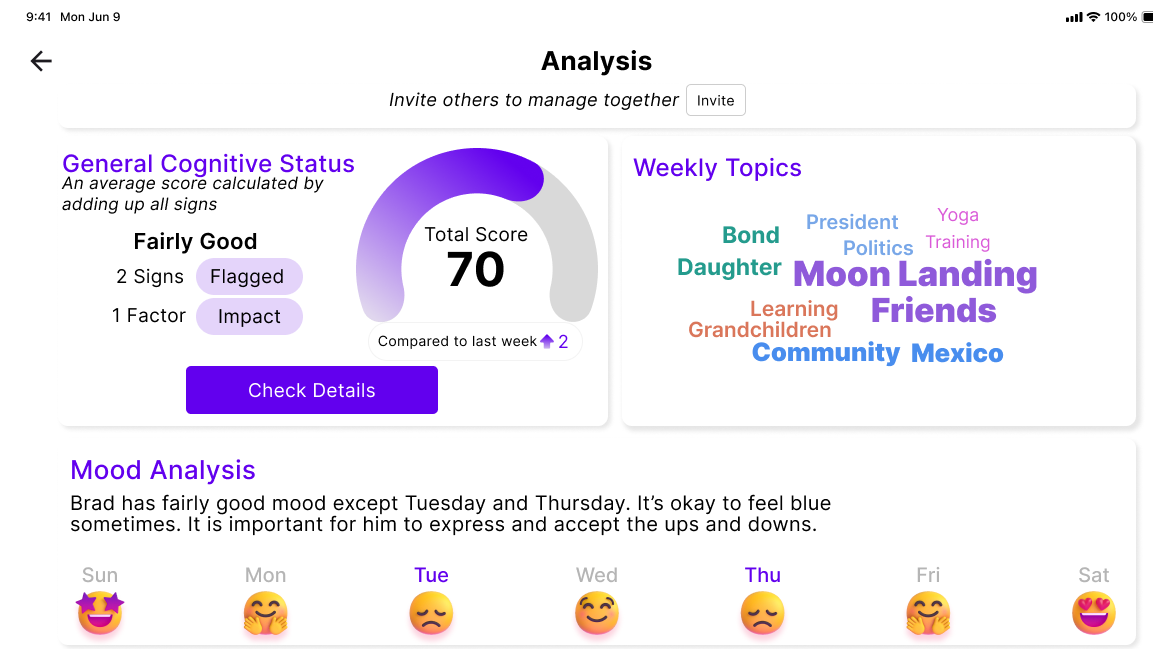

Through reflexive thematic analysis, we found that people living with dementia wanted to use the system to maintain autonomy, especially by talking about their symptoms with the robot and using tracked information as a memory aid. Care partners valued numerical insights into the cognitive progress of people living with dementia only when accompanied by clear calls to action that supported them in their caregiver roles. Simultaneously, in their relational roles as spouses or children, care partners valued tracking memories and discussion points to understand their loved ones better.

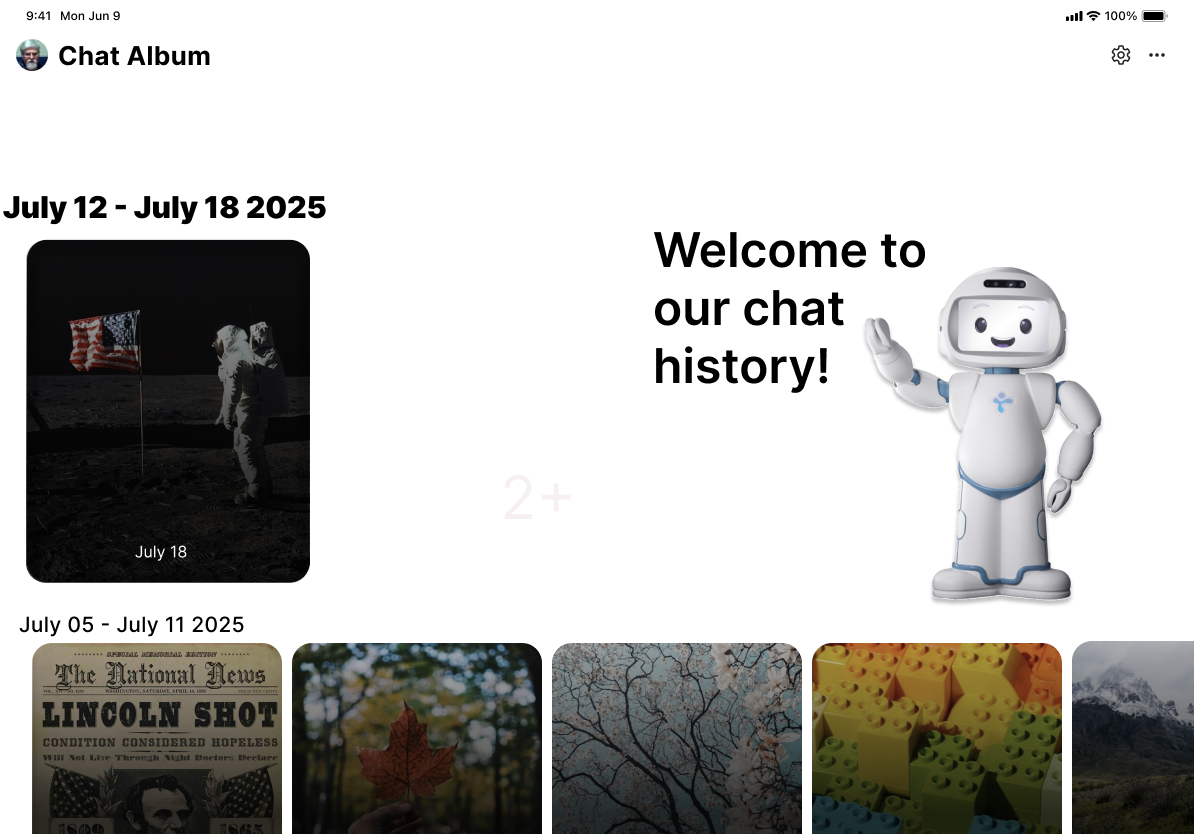

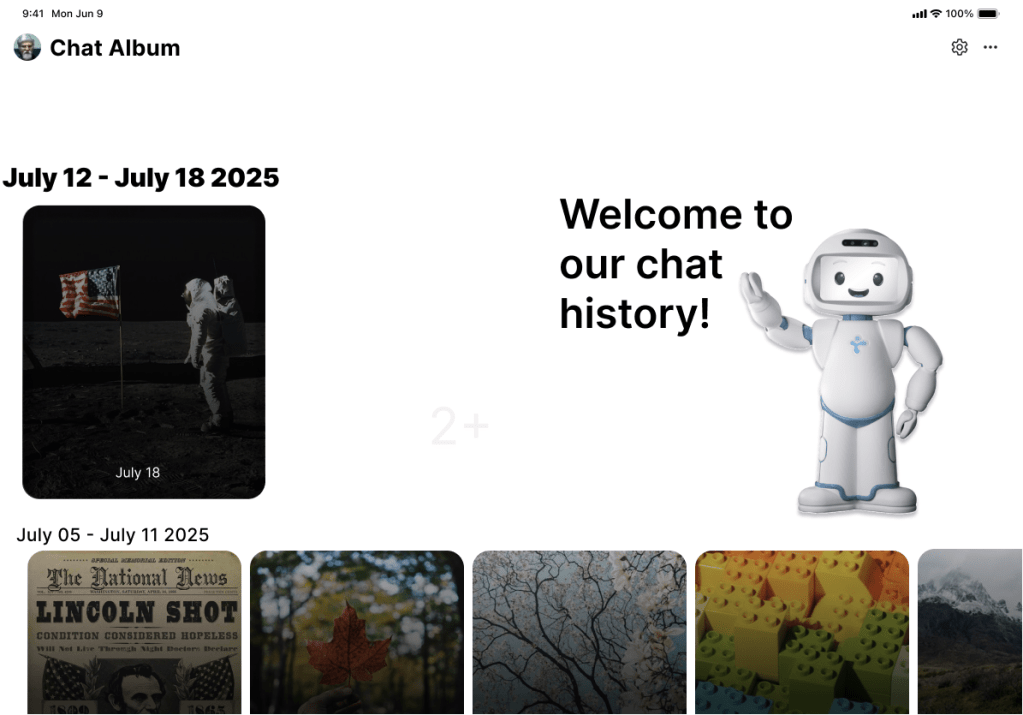

Our newest iteration of Cognibot features memory tracking in the form of the Chat Album, a reimagined chat history page that tells the story of previous conversations through pictures.

The new design is more visual, which makes it simpler and easier to take in and understand.

What’s Next?

We will be conducting more usability studies to evaluate how much data to show and how much to keep abstract. We are also integrating a physical robot with the application, which will allow for more flexible, physically embodied interaction. Our speech analysis algorithms need more fine-tuning, and, of course, there will always be further advances in AI and LLMs that we can incorporate.